Do You Have a Data Lake Problem?

Before we start, let’s be clear about what “data lake” means.

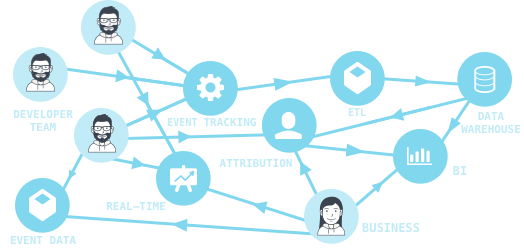

A data lake is a method of storing data within a system or repository, in its natural format, that facilitates the collocation of data in various schemata and structural forms, usually object blobs or files. The idea of a data lake is to have a single store of all data in the enterprise, ranging from raw data (an exact copy of source system data) to transformed data used for tasks such as reporting, visualization, analytics and machine learning. A data lake includes structured data from relational databases (rows and columns), semi-structured data (CSV, logs, XML, JSON), unstructured data (emails, documents, PDFs) and even binary data (images, audio, video), creating a centralized data store that accommodates all forms of data.
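As a rough illustration of “storing data in its natural format”, here is a minimal sketch that copies files unchanged into a raw zone, grouped by the categories listed above. The folder layout (`raw/<category>/`), the function names and the extension-to-category mapping are all assumptions made for this example; this is not how rakam or any particular product does it.

```python
import shutil
from pathlib import Path

# Hypothetical mapping from file extension to the categories named above.
# Real data lakes usually rely on richer metadata than a file extension.
CATEGORY_BY_SUFFIX = {
    ".parquet": "structured",      # assumed export format for relational tables
    ".csv": "semi_structured",
    ".log": "semi_structured",
    ".xml": "semi_structured",
    ".json": "semi_structured",
    ".eml": "unstructured",
    ".docx": "unstructured",
    ".pdf": "unstructured",
    ".png": "binary",
    ".mp3": "binary",
    ".mp4": "binary",
}


def categorize(path: Path) -> str:
    """Return the category for a file, or "unknown" for unmapped extensions."""
    return CATEGORY_BY_SUFFIX.get(path.suffix.lower(), "unknown")


def land_raw(source: Path, lake_root: Path) -> Path:
    """Copy a file byte-for-byte into <lake_root>/raw/<category>/."""
    target_dir = lake_root / "raw" / categorize(source)
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    shutil.copyfile(source, target)  # no parsing or transformation: the raw copy is exact
    return target
```

For example, `land_raw(Path("events.json"), Path("lake"))` would place an untouched copy at `lake/raw/semi_structured/events.json`. Transformed data for reporting or machine learning would then live in separate zones derived from these raw copies.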

The first question that comes to mind is probably how to build one. Building a tool or product for a data lake is not easy. But with the right architecture it is possible to consolidate data in a single large repository and dashboard, and to run many different workloads and application stacks in one place at the same time (different integrations, custom integrations, etc.) while giving end users the best experience.

Old way

The question is: does your company, start-up, or agency want to be part of the future or part of the past?

There are always several reasons to build an internal analytics service, and we have been seeing this lately with enterprise clients. It is not an easy task, though: it carries a huge risk and takes a lot of time. That is why we suggest starting from the beginning and trying to understand your data from an early stage. For instance, in our experience, more than 100K visits or downloads for a web page or mobile app is a good point to start.

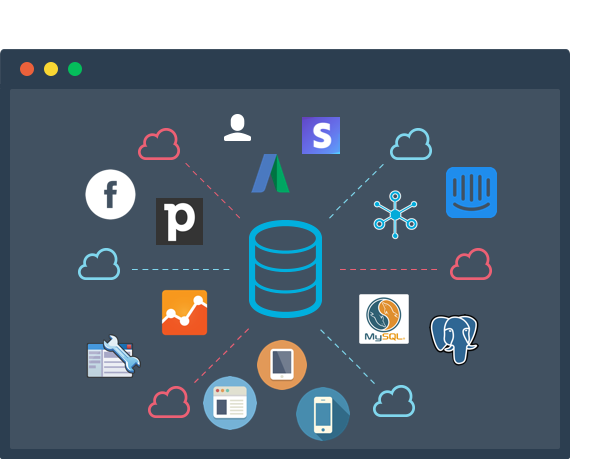

New way: no coding, integrate in seconds

What reasons matter to SMBs and start-ups in this case?

- Everyone wants, and sometimes needs, to store data on their own servers so that they have full control over it.

- Obviously, nobody wants to spend too much time developing a data pipeline solution.

- Of course, you want to be able to customise your data analytics service when you need to.

- Lastly, it is cost-effective (yes, you won’t regret it! :))

That simply shows why a start-up or an SMB needs rakam. We have been working with Scorp for more than 6 months so far, and here is what they think about rakam.

“With Rakam.io we’re able to process our custom event data with ease. Being able to write our own custom reports allows us to create detailed reports. We can segment users based on complicated action history and see user engagement and retention for each user segment. The rakam BI panel allows us to create useful reports with graphs and tables easily, which saves us the trouble of developing an extra analytics panel.” Kaan Uğurlu | Scorp, Co-Founder

Let us find the best data analytics solution for you and solve all your issues with rakam. Check out our demo as well: rakam.io/demo